
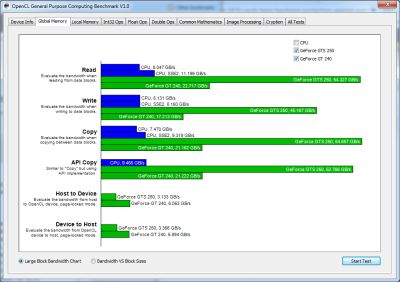

… degree with the Institute of Microelectronics, Tsinghua University, Beijing, China. For more information about me and my research, you can go to my homepage.

One of my research interests is architecture design for deep learning. This is an exciting field where fresh ideas come out every day, so I'm collecting works on related topics.

I'm working on energy-efficient architecture design for deep learning. A deep convolutional neural network architecture (DNA) has been designed with 1~2 orders of magnitude higher energy efficiency than state-of-the-art works. I'm trying to improve the architecture for ultra-low-power computing. I hope my new work will come out in the near future.

Deep Convolutional Neural Network Architecture with Reconfigurable Computation Patterns.

- This is the first work to assign Input/Output/Weight Reuse to different layers of a CNN, which optimizes system-level energy consumption based on different CONV parameters.
- A 4-level CONV engine is designed to support different tiling parameters for higher resource utilization and performance.
- A layer-based scheduling framework is proposed to optimize both system-level energy efficiency and performance.

This is a collection of conference papers that interest me. The emphasis is on, but not limited to, neural networks on silicon. Papers of significance are marked in bold.

- Reno: A Highly-Efficient Reconfigurable Neuromorphic Computing Accelerator Design. (University of Pittsburgh, Tsinghua University, San Francisco State University, Air Force Research Laboratory, University of Massachusetts.)
- Scalable Effort Classifiers for Energy Efficient Machine Learning.
- Design Methodology for Operating in Near-Threshold Computing (NTC) Region.
- Opportunistic Turbo Execution in NTC: Exploiting the Paradigm Shift in Performance Bottlenecks.
- DeepBurning: Automatic Generation of FPGA-based Learning Accelerators for the Neural Network Family.
  - Hardware generator: Basic building blocks for neural networks, and address generation unit (RTL).

Workshop on automatic performance tuning. Evaluating performance and portability of OpenCL programs. OpenCL Performance Evaluation on Modern Multi Core CPUs. A portable and high-performance matrix operations library for CPUs, GPUs and beyond. Improving Performance Portability in OpenCL Programs. CUDA Occupancy Calculator. NVIDIA, 2009. The scalable heterogeneous computing (SHOC) benchmark suite. PARTANS: An autotuning framework for stencil computation on multi-GPU systems. Auto-tuning a high-level language targeted to GPU codes.

An experimental study on performance portability of OpenCL kernels. A static task partitioning approach for heterogeneous systems using OpenCL. The Architecture and Evolution of CPU-GPU Systems for General Purpose Computing. Scalability and Parallel Execution of OmpSs-OpenCL Tasks on Heterogeneous CPU-GPU Environment.

OmpSs-OpenCL Programming Model for Heterogeneous Systems. Heterogeneous Computing with OpenCL. Morgan Kaufmann.
